Data Centers in Space

#166 - Why the future of compute may start above the atmosphere

Hello friends, I hope you had a great week!

A few weeks ago, when Elon Musk started hinting at the idea on X, I ended up talking about it over dinner with friends. None of us are physicists, but we all had the same reaction: is this just hard, or is it actually a good idea? Someone dropped a tweet in our WhatsApp group about data centers on the Moon. We traded a few messages, laughed about how extreme it sounded, and moved on with our lives.

That was supposed to be the end of it. Then I kept seeing more people talk about it… not in a sci-fi tone, but in a “this could actually work” tone. And then I remembered something I’d heard years ago from Jeff Bezos. He talked about his long-term vision for humanity: over time, we should move the heavy industrial activity off Earth and into space. The idea was simple and a bit crazy: Earth becomes a residential zone, and manufacturing moves to orbit or the Moon. At the time, I filed it away under “billionaire long-horizon visions” and assumed it belonged to a 50- or 100-year timeline.

But writing this post made me realize something I hadn’t appreciated back then. It’s happening. Not in the full Bezos sense (yet), but in a meaningful first step. The conversation around orbital compute is not fictional. It is emerging from real constraints we’re hitting on the ground. It stopped feeling like a thought experiment and started feeling like the early version of the thing Bezos was pointing to.

If you break AI infrastructure down to first principles, you don’t get many variables. Power, cooling, connectivity, and chips. Everything else is buildings, zoning, and accounting. On Earth, all four of the core variables are hitting friction. Power grids are overloaded. Cooling is turning into a limiting factor. Land is expensive or politically contentious. And the next generation of racks looks more like industrial machinery than “servers in a room.”

Seen from that angle, putting compute in orbit stops looking like a stunt. It becomes a clean way to ask where the real bottlenecks are, who benefits if orbital data centers work, and who is holding the risk if they do.

The AI boom hit a physical wall

The last two years turned AI from a software story into an infrastructure story. Models got bigger, but the real shift happened underneath: data centers went from being a cost center to a strategic asset, and the physical limits around them became visible. The industry now talks about GPUs, power plants, land rights, substations, and cooling in the same breath. That’s usually a sign that the bottleneck moved from code to physics.

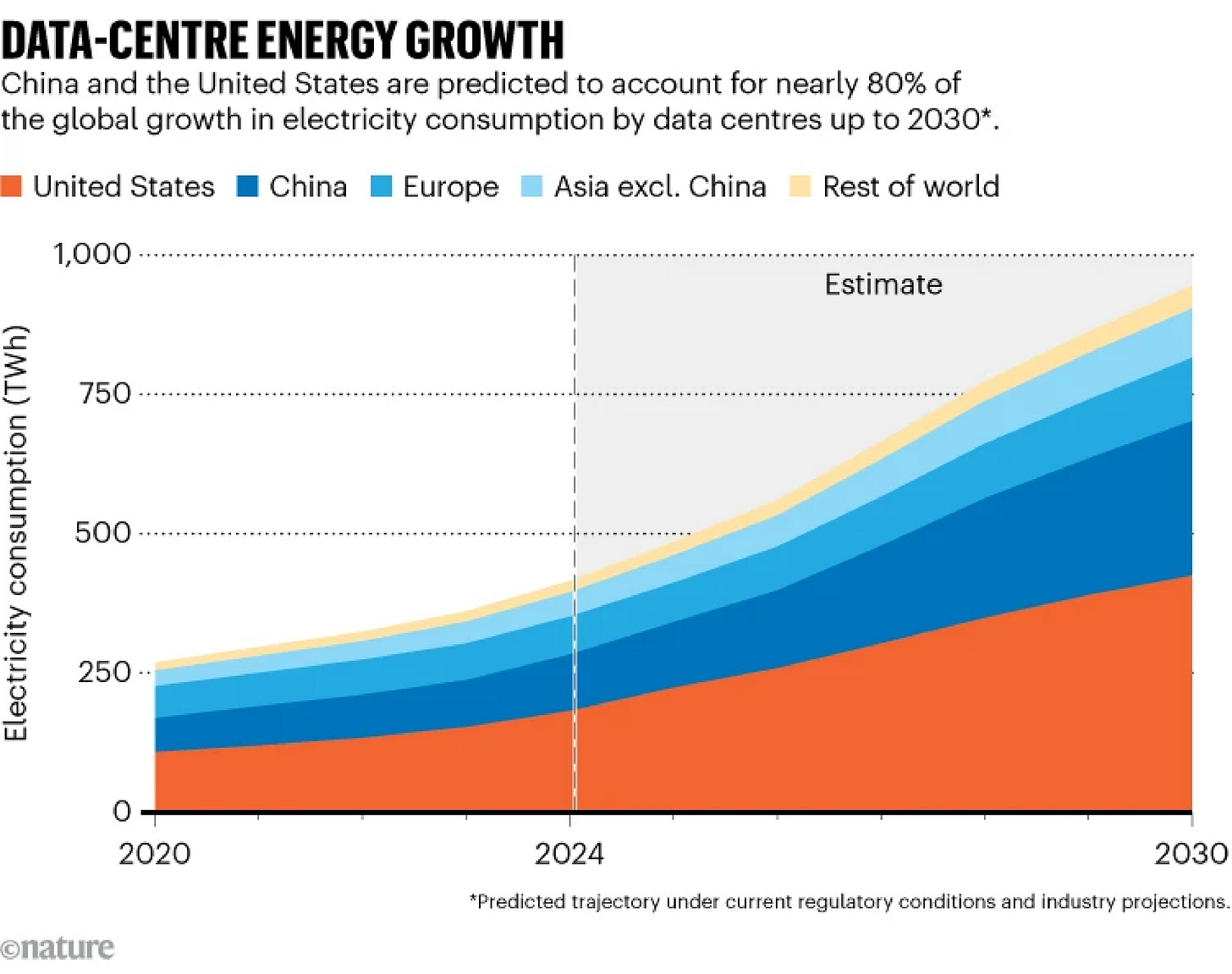

The first wall is power. A single cutting-edge training cluster can demand hundreds of megawatts. Utilities in the US report multi-gigawatt requests from AI players, and annual power demand from data centers is forecast to roughly double by 2030. By some of those estimates, data centers would use more electricity in 2030 than all of Japan does today!

Blackwell-class racks can pull more than 100 kilowatts each. Buildings designed ten years ago cannot absorb that kind of density without major retrofitting.
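To put those rack numbers in perspective, here is a back-of-envelope sketch. The 300 MW site, 120 kW per rack, and PUE (power usage effectiveness) of 1.2 are illustrative assumptions, not figures from any specific project.

```python
HOURS_PER_YEAR = 8760


def racks_for_site(site_mw: float, rack_kw: float, pue: float) -> int:
    """Number of racks a site can feed, given its total facility power.

    PUE (power usage effectiveness) = total facility power / IT power,
    so the power left for IT equipment is site_mw / pue.
    """
    it_kw = site_mw * 1000 / pue
    return int(it_kw // rack_kw)


def annual_energy_twh(site_mw: float, load_factor: float = 1.0) -> float:
    """Energy drawn over a year at a constant average load, in TWh (MWh / 1e6)."""
    return site_mw * load_factor * HOURS_PER_YEAR / 1_000_000


# Illustrative inputs (assumptions, not data from a real site)
print(racks_for_site(300, 120, 1.2))
print(f"{annual_energy_twh(300):.2f} TWh/year")
```

With those inputs, a 300 MW site supports roughly 2,083 racks and draws about 2.6 TWh a year if it runs flat out.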

Cooling is the second wall. Water-cooled systems, immersion cooling, and custom liquid loops are becoming mandatory for high-performance clusters. These systems are expensive, complex, and energy-intensive. They also require space, permitting, and operational oversight that look more like chemical plants than tech campuses. The more power you need, the more cooling you need, and the more land and political capital you consume.

The third wall is local friction. Many towns don’t want a 50-acre concrete box that contributes little employment and demands a large share of grid capacity. Siting battles slow everything down. The cheapest watt is the one you never have to negotiate with a municipality for.

This is the backdrop for the renewed interest in orbit. When your constraints come from physics and local politics, it’s natural to step back and ask whether Earth is the wrong venue. Orbit isn’t free or simple, but it removes several of the forces that now define the economics of AI infrastructure.

First principle: power in orbit is structurally better

If you follow power economics long enough, you end up with a simple idea: AI is a conversion problem. You turn electricity into tokens. The cheapest and most reliable watt wins.

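Space changes that equation in ways that are hard to replicate on Earth. In the right orbit, solar power is close to continuous and highly predictable: no clouds, no weather, little seasonal variation, and, in a dawn-dusk sun-synchronous orbit, almost no night. Sunlight above the atmosphere is also stronger, about 1,360 W/m² versus roughly 1,000 W/m² at the ground at midday, and orbital panels operate close to a full duty cycle. That means far less battery storage and far less engineering effort spent managing intermittency.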
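A rough way to see the duty-cycle argument is to compare annual energy per square meter of panel. The sketch below assumes the same 30% cell efficiency in both places, a 99% sunlit fraction for a dawn-dusk orbit, and a 20% capacity factor for a good terrestrial site. All three are illustrative assumptions, and the sketch ignores pointing losses, degradation, and power conversion.

```python
HOURS_PER_YEAR = 8760
IRRADIANCE_ORBIT_W_M2 = 1361   # mean solar irradiance above the atmosphere
IRRADIANCE_GROUND_W_M2 = 1000  # standard peak irradiance at the surface


def annual_kwh_per_m2(irradiance_w_m2: float, capacity_factor: float,
                      efficiency: float) -> float:
    """Electrical energy per m^2 of panel per year.

    capacity_factor folds in night, weather, sun angle, and (in orbit)
    eclipses: it is average output as a fraction of peak output.
    """
    return irradiance_w_m2 * capacity_factor * efficiency * HOURS_PER_YEAR / 1000


EFFICIENCY = 0.30  # assumed identical cells in both places, to isolate the environment
orbit = annual_kwh_per_m2(IRRADIANCE_ORBIT_W_M2, 0.99, EFFICIENCY)    # assumed dawn-dusk orbit
ground = annual_kwh_per_m2(IRRADIANCE_GROUND_W_M2, 0.20, EFFICIENCY)  # assumed good sunny site
print(f"orbit {orbit:.0f} kWh/m2, ground {ground:.0f} kWh/m2, ratio {orbit / ground:.1f}x")
```

Under these assumptions the orbital panel collects roughly 6.7 times as much energy per square meter per year (about 3,540 versus 526 kWh/m²).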

This is why space-based solar has been discussed for decades. It solves the hardest part of renewables: stability. AI workloads care about the same thing. Training and inference need steady baseload power, not cheap energy that disappears at sunset.

There’s also a grid advantage. On Earth, hyperscalers compete for substation capacity and transmission rights, often waiting years for upgrades. In orbit, power can be generated and consumed in the same system, bypassing one of the biggest bottlenecks in infrastructure buildout.

Finally, space removes local friction. Large solar projects on Earth face land constraints, permitting delays, and political opposition. None of that exists in orbit.

Orbital power isn’t cheap today. Launch costs and system complexity are real. But the direction of travel matters. If AI demand keeps growing, the environment with the best power profile becomes increasingly attractive. Earth’s energy system wasn’t built for this workload. Orbit offers a clean slate.

First principle: cooling and density favor space

Cooling is where the economics of AI data centers start to break. A growing share of capex and operating cost is no longer the compute itself but the systems that keep it from overheating. High-end clusters already require liquid cooling, with immersion tanks, cold plates, chillers, pumps, and heat exchangers turning data centers into industrial facilities rather than server rooms.

On Earth, cooling compounds with density. More compute means more cooling, more space, more permitting, and more local friction. Water use has become a visible constraint, especially during heat waves. As racks approach 120–150 kilowatts, these trade-offs stop being marginal.

Orbit changes the setup. There is no air or water to dump heat into. Instead, fluid loops carry heat from the chips to radiators, which emit it into space as infrared. It’s not trivial, but it avoids heat waves, humidity, noise limits, water rights, and negotiations with municipalities. Cooling becomes an engineering problem rather than a political one.
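The radiator side can be sized with the Stefan-Boltzmann law: a surface at temperature T with emissivity ε radiates εσT⁴ watts per square meter. Here is a minimal sketch, assuming essentially all electrical power ends up as heat, a 0.9 emissivity, a 300 K radiator, and a 3 K deep-space sink. The sink assumption ignores sunlight and infrared from Earth, so the result is a best case.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w: float, t_radiator_k: float, emissivity: float = 0.9,
                     t_sink_k: float = 3.0, sides: int = 1) -> float:
    """Radiator area needed to reject heat_w watts by thermal radiation alone.

    Net flux per m^2 of panel is sides * emissivity * SIGMA * (T_rad^4 - T_sink^4).
    A 3 K sink ignores sunlight and Earth's infrared, so this is a best case.
    """
    flux_w_m2 = sides * emissivity * SIGMA * (t_radiator_k ** 4 - t_sink_k ** 4)
    if flux_w_m2 <= 0:
        raise ValueError("radiator must be hotter than its sink")
    return heat_w / flux_w_m2


# 1 MW of heat from the compute, radiator at an assumed 300 K (about 27 C)
print(f"{radiator_area_m2(1e6, 300.0):,.0f} m^2 one-sided")
print(f"{radiator_area_m2(1e6, 300.0, sides=2):,.0f} m^2 of two-sided panel")
```

For 1 MW of heat this gives roughly 2,400 m² of one-sided radiator, or about 1,200 m² of panel if both faces radiate.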

This matters for density. On Earth, density eventually hits municipal limits even if the hardware can handle it. In orbit, the ceiling is mostly technical. If heat can be managed and systems maintained, clusters can scale far beyond what suburban power and cooling infrastructure allows.

The strategic implication is simple. If AI demand keeps rising, the binding constraint won’t be GPUs. It will be the combination of power, cooling, and zoning. Orbit removes zoning, reshapes cooling economics, and expands the available engineering headroom.

I’ll be the first to say that I don’t have the physics background to judge this on instinct. My initial reaction was the same as most people’s: “How can cooling in space possibly work if there’s no air?” I asked ChatGPT to walk me through the basics, expecting to confirm the conventional view. Instead, I ended up convinced that the first-principles argument is at least reasonable once you factor in radiative cooling at higher temperatures and the hard limits the Earth environment is running into. That said, I’m fully aware this is a complicated topic, and I’m happy to be challenged by people who actually work in thermal engineering or spacecraft design. If you think I’m missing something important, reply to the newsletter or message me and explain it to me. I’d genuinely like to learn.

First principle: networking, latency, and the user path

The first objection to data centers in orbit is usually latency. Signals travel farther. That sounds fatal until you look at how networks actually work today. A simple request already passes through multiple hops: device, cell tower, metro fiber, regional exchange, data center, and back again. Latency is as much about routing and congestion as raw distance.

Satellites change the geometry. Devices can connect directly to orbital nodes, especially with direct-to-cell hardware. The round trip to low Earth orbit is longer than to a nearby data center, but not by orders of magnitude. For most inference tasks, the difference is acceptable. You’re not training large models on a phone. You’re asking for small, latency-tolerant outputs.
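Here is a sketch of the propagation part of that claim. It assumes a 550 km orbital altitude (a typical low Earth orbit broadband altitude, used here as an assumption) and counts only speed-of-light delay, not processing, queuing, or ground-station hops.

```python
C_KM_S = 299_792.458  # speed of light in vacuum, km/s


def round_trip_ms(one_way_km: float, speed_km_s: float = C_KM_S) -> float:
    """Propagation-only round-trip time over a path of one_way_km."""
    return 2 * one_way_km / speed_km_s * 1000


# Assumed 550 km altitude: satellite straight overhead vs. low on the horizon
print(f"overhead:   {round_trip_ms(550):.1f} ms")
print(f"slant path: {round_trip_ms(2000):.1f} ms")  # hypothetical 2,000 km slant range
```

A satellite directly overhead adds about 3.7 ms of round-trip propagation; at a low elevation angle, a hypothetical 2,000 km slant range adds about 13 ms. That is more than a nearby terrestrial data center, but it is milliseconds, not orders of magnitude.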

Space also enables cleaner routing. Inter-satellite laser links let data move across the network without touching Earth until delivery. That avoids congestion, national choke points, and undersea cable politics, and reduces the number of intermediate hops that add jitter.
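A simple latency model makes the routing point concrete: total delay is propagation (distance times refractive index, divided by the speed of light) plus a per-hop cost. Light in silica fiber travels at roughly c/1.47, while laser links between satellites run at essentially c. The route lengths, hop counts, and 0.5 ms per-hop figure below are hypothetical.

```python
C_KM_S = 299_792.458  # speed of light in vacuum, km/s
FIBER_INDEX = 1.47    # approximate group index of silica fiber


def one_way_ms(path_km: float, refractive_index: float = 1.0,
               hops: int = 0, per_hop_ms: float = 0.0) -> float:
    """Propagation delay plus a flat per-hop cost for routing and queuing."""
    propagation_ms = path_km * refractive_index / C_KM_S * 1000
    return propagation_ms + hops * per_hop_ms


# Hypothetical intercontinental request: every number here is an assumption
fiber = one_way_ms(10_000, FIBER_INDEX, hops=12, per_hop_ms=0.5)
laser = one_way_ms(11_100, 1.0, hops=4, per_hop_ms=0.5)  # up, across the mesh, down
print(f"fiber {fiber:.1f} ms vs laser mesh {laser:.1f} ms")
```

Even with a longer physical path, the vacuum route comes out ahead in this toy example (about 39 ms versus 55 ms one way), partly from faster propagation and partly from fewer hops.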

Coverage matters too. A constellation can serve rural areas that will never get fiber and stay operational during outages or extreme weather. As inference moves closer to the edge, orbit becomes a natural extension of the network rather than an exotic alternative.

The strategic shift is this: for some AI workloads, the user path may move from “device → internet → data center” to “device → sky → compute.” Traditional data centers don’t disappear, but they stop being the default endpoint. That’s why networking belongs in the same first-principles discussion as power and cooling. Once satellites become part of the inference chain, compute in orbit looks less like a stunt and more like a continuation of trends already underway.

The hard part: launch costs, integration, and who actually wins

Everything so far describes why orbit looks attractive on paper. The hard part is turning the physics advantage into an actual system. Launch costs are the first hurdle. Even with reusable rockets, lifting heavy hardware into orbit is expensive. A large-scale data center isn’t a small CubeSat. It’s tonnes of panels, radiators, compute modules, shielding, and assembly equipment. The economics change only if launch capacity becomes abundant and cheap enough to treat heavy-lift as routine.
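Launch cost is easiest to reason about per kilowatt of delivered compute. The sketch below multiplies system power by an assumed specific mass (kilograms of panels, radiators, compute, shielding, and structure per kW) and a launch price per kilogram. The 10 kg/kW figure and both price points are hypothetical inputs, chosen only to show how strongly the answer depends on launch price.

```python
def launch_cost_usd(system_mw: float, kg_per_kw: float, usd_per_kg: float) -> float:
    """Launch cost for a system of system_mw with the given specific mass."""
    mass_kg = system_mw * 1000 * kg_per_kw
    return mass_kg * usd_per_kg


SYSTEM_MW = 100
KG_PER_KW = 10  # hypothetical: panels + radiators + compute + shielding + structure
for price in (2000, 200):  # hypothetical launch prices, USD per kg
    total = launch_cost_usd(SYSTEM_MW, KG_PER_KW, price)
    per_kw = total / (SYSTEM_MW * 1000)
    print(f"${price}/kg -> ${total / 1e9:.1f}B total, ${per_kw:,.0f} per kW")
```

At 10 kg/kW, a 100 MW system weighs 1,000 tonnes. At a hypothetical $2,000/kg that is $2 billion just to launch, or $20,000 per kW; at $200/kg it falls to $200 million.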

Radiation is the second hurdle. Hardware in orbit needs protection from cosmic rays and solar storms. Terrestrial servers don’t face the same environment. Shielding adds weight, complexity, and cost. You also can’t send a repair crew with a van and a ladder. Reliability must be engineered in from the start. Redundancy, modular replacement, and long-lived components become mandatory rather than optional.
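Redundancy can be quantified with a k-of-n model: if a system needs k working modules out of n, and each survives the mission independently with probability p, availability is the binomial tail. Independence is a strong assumption here, since a solar storm can hit every module at once, and the 0.95 survival figure is hypothetical.

```python
from math import comb


def k_of_n_availability(n: int, k: int, p_module: float) -> float:
    """Probability that at least k of n independent modules are still working.

    Each module survives with probability p_module; failures are assumed
    independent, which real radiation events (e.g. solar storms) violate.
    """
    return sum(comb(n, i) * p_module ** i * (1 - p_module) ** (n - i)
               for i in range(k, n + 1))


P = 0.95  # hypothetical per-module survival probability over the mission
print(f"10 of 10: {k_of_n_availability(10, 10, P):.3f}")
print(f"10 of 12: {k_of_n_availability(12, 10, P):.3f}")
```

With 10 modules and no spares, the chance that all 10 survive is about 60%; adding two spares (needing 10 of 12) raises it to about 98%.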

Maintenance is another unknown. You can design orbital systems for robotic servicing, but that ecosystem barely exists at scale. If an array fails or a module overheats, the remediation plan can’t involve waiting six months for a launch window. Space demands a different approach to fault tolerance.

Then there’s integration. Running compute in orbit only works if the operator controls three layers: rockets, satellites, and AI infrastructure. Very few companies sit at that intersection. SpaceX is the obvious one. Starship offers massive lift capacity. Starlink provides the communications fabric. xAI and Tesla supply the demand for compute. That level of vertical integration creates an advantage that looks difficult to match. Traditional hyperscalers buy land and negotiate grid hookups. They don’t own rockets. They don’t operate constellations. Their scaling path is tied to Earth’s permitting cycles and energy politics.

Governments will play a role too. National regulators will care about orbital congestion, power beaming, debris mitigation, and spectrum use. Countries might see orbital compute as strategic infrastructure and could even subsidize it. That cuts both ways. Government support can accelerate deployment but also adds compliance and geopolitical friction.

The question for incumbents is simple. If orbital compute becomes economically credible, even at small scale, does it erode the foundations of their future capex plans? The hyperscalers are committing tens of billions to terrestrial campuses that assume Earth remains the only viable venue. If that assumption weakens, so does the certainty of their returns.

Orbit isn’t easy. But difficulty isn’t the relevant test. The relevant test is whether the path is getting easier faster than Earth-based scaling is getting harder. Right now, that gap is narrowing.
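That test can be written as a toy model: terrestrial cost per unit of compute rising a few percent per year, orbital cost falling faster, and a check for the first year the curves cross. Every number below is a hypothetical input on an arbitrary cost index, not a forecast.

```python
def crossover_year(start_year: int, earth_cost: float, earth_growth: float,
                   orbit_cost: float, orbit_decline: float,
                   horizon_years: int = 50):
    """First year the orbital cost index is at or below the terrestrial one.

    Terrestrial cost grows by earth_growth per year; orbital cost falls by
    orbit_decline per year. Returns None if no crossover within the horizon.
    """
    for t in range(horizon_years + 1):
        earth = earth_cost * (1 + earth_growth) ** t
        orbit = orbit_cost * (1 - orbit_decline) ** t
        if orbit <= earth:
            return start_year + t
    return None


# Hypothetical inputs on an arbitrary cost index
print(crossover_year(2025, earth_cost=10, earth_growth=0.05,
                     orbit_cost=40, orbit_decline=0.15))
```

With these inputs (orbit starting at 4x the terrestrial cost, falling 15% a year while Earth rises 5%), the curves cross in 2032. Changing either rate moves that year a lot, which is the point of the exercise: the answer lives in the rates, not in today's gap.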

If this is even partially right, the risk sits in the assumptions

One of the more interesting reactions to the “data centers in space” idea came from SemiAnalysis, pointing out that vacuum doesn’t conduct or convect heat, so the only way to cool is radiation, which requires huge radiators. It’s a fair objection. Space doesn’t magically cool hardware. You don’t get to dump heat into air or water. You need engineered radiators, and they need to be large.

But then you get Musk’s reply in the thread:

“Radiative cooling rising as T⁴ really favors higher operating temperatures. Ultimately, solar-powered AI satellites are the only way to achieve a Kardashev-II civilization.”

And that comment shifts the discussion from “is this practical today?” to “what direction of travel aligns with physics at scale?”
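The T⁴ term is why operating temperature matters so much. Ignoring the cold-space sink, the radiator area needed for a fixed heat load scales as 1/T⁴, so the sketch below reports area relative to a 300 K baseline. Whether the chips can actually tolerate hotter coolant is the hard engineering question; the temperatures here are illustrative assumptions.

```python
def relative_radiator_area(t_k: float, t_ref_k: float = 300.0) -> float:
    """Radiator area at temperature t_k relative to one at t_ref_k.

    From P = emissivity * sigma * A * T^4 with a negligible sink temperature,
    A is proportional to 1 / T^4 for a fixed heat load.
    """
    return (t_ref_k / t_k) ** 4


for t in (300, 330, 360, 400):  # illustrative radiator temperatures, kelvin
    print(f"{t} K -> {relative_radiator_area(t):.2f}x the 300 K area")
```

Running the radiator at 360 K instead of 300 K cuts the area to about 48% of the baseline; at 400 K it is about 32%.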

A Kardashev Type II civilization is one that can harness most of the energy output of its star. It’s not about science fiction tropes. It’s a simple metric for how much energy a society can collect and use. Today’s AI trajectory points toward orders of magnitude more compute and orders of magnitude more energy. At some point, the terrestrial grid becomes the wrong abstraction. If energy and cooling are the real limiters of intelligence at scale, the system gravitates toward the venue that has the most energy and the cleanest heat-rejection environment.
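Carl Sagan proposed a continuous version of the Kardashev scale, K = (log₁₀P − 6)/10 with P in watts, which places Type I at 10¹⁶ W and Type II at 10²⁶ W. A quick sketch, using approximate inputs: roughly 2 × 10¹³ W for humanity's current total power use and 3.828 × 10²⁶ W for the Sun's luminosity.

```python
import math


def kardashev(power_w: float) -> float:
    """Sagan's continuous Kardashev rating: K = (log10(P) - 6) / 10, P in watts."""
    return (math.log10(power_w) - 6) / 10


HUMANITY_W = 2e13   # approximate current total power use
SUN_W = 3.828e26    # nominal solar luminosity
print(f"humanity: K = {kardashev(HUMANITY_W):.2f}")
print(f"Sun:      K = {kardashev(SUN_W):.2f}")
```

That puts today's civilization at about K ≈ 0.73 and the Sun's full output at about K ≈ 2.06, which gives a sense of how many orders of magnitude separate the two.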

That’s the deeper point behind the Musk quote.

Not “we’re building Dyson spheres next year,” but “the direction of optimization moves outward.” Once you take seriously the idea that tokens per watt is the governing metric, it becomes hard to defend a future where all compute stays on Earth.

This doesn’t mean orbital AI is imminent. It means the opportunity cost of ignoring it is rising. Hyperscalers are investing tens of billions into land, substations, water rights, and cooling systems that assume the next decade of scaling happens on the ground. If even a fraction of the physics-based argument turns out to be right, those investments carry more risk than the market currently prices in.

Orbit is not easy. The engineering is hard, the economics depend on launch costs, and the entire architecture has to be rethought. But difficulty is not a moat. Physics tends to win over incumbents. And the people thinking most aggressively about the long-term energy profile of AI aren’t imagining bigger warehouses; they’re imagining a network of solar-powered compute nodes above the atmosphere.

Factories on the moon

A funny thing happened while I was finishing this piece. On the way to school, I started talking about it with my kids. I sometimes tell them what I’m writing for the week, partly because I hope it sparks their imagination and partly because kids tend to give genuinely original angles that adults no longer produce.

I simplified it: “Imagine a future where factories move to the Moon.”

They immediately went into problem-solving mode.

Lulu asked:

“But how do you stop the factories from floating away if the gravity is low?”

And Coco added:

“I know how you should send the things for the factory. You shouldn’t put the bricks and the tools in the same rocket as the people. You should put them in another rocket and attach it with a chain.”

Both comments made me smile. And both comments reminded me why I like talking about these topics with them. They don’t start from constraints. They start from possibility, then worry about constraints later.

Most of the time, adults talk themselves out of interesting ideas long before the physics or the economics even get a vote. Kids don’t. Which is probably why they’re often closer to the mindset needed to imagine systems we haven’t built yet.

Have a great weekend!

Giovanni

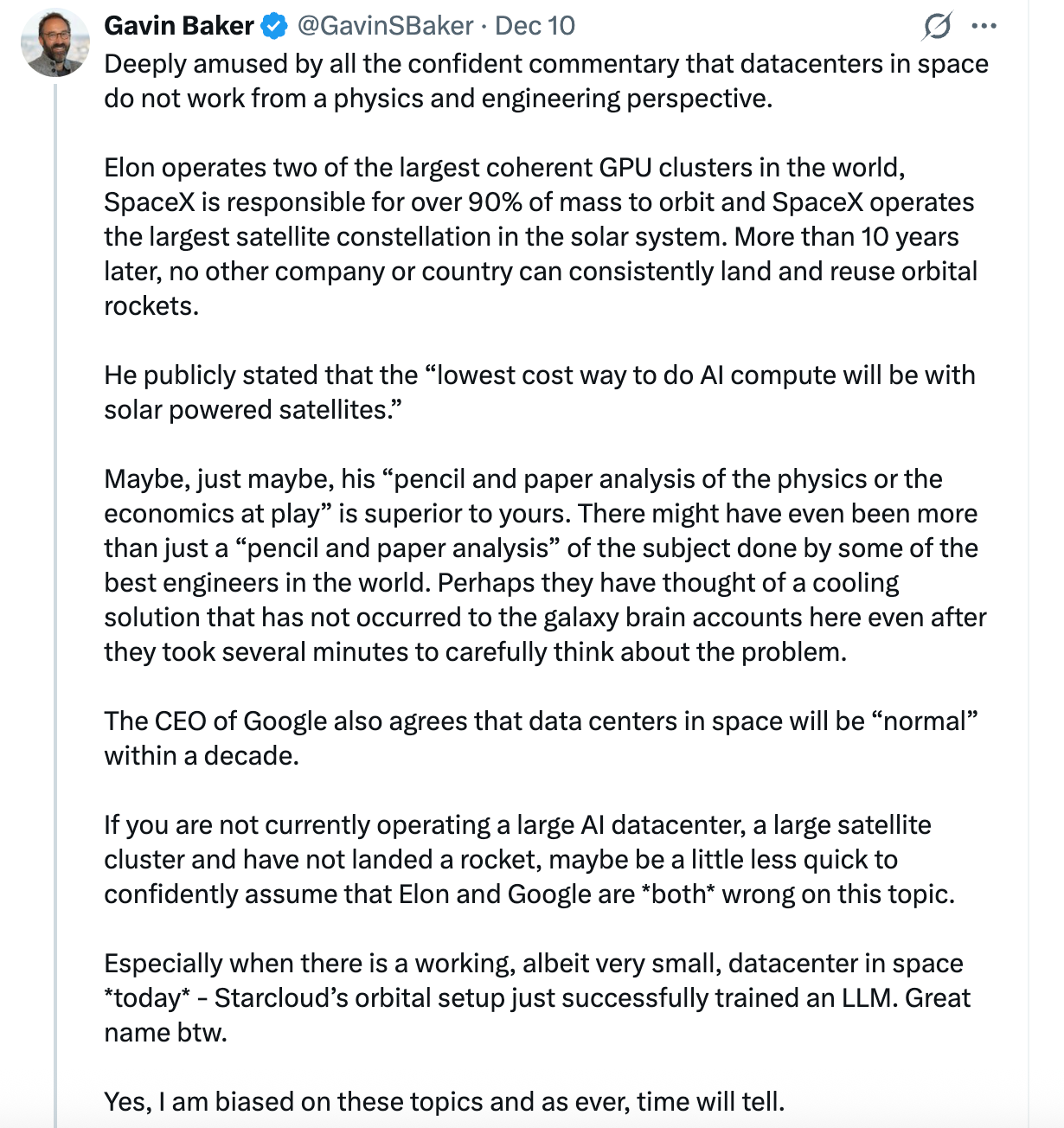

P.S. As usual, on X there are a lot of naysayers saying “this is impossible, it’s physics 101”. I really agree with this tweet, though: Maybe, just maybe, I trust Elon and the Google CEO a bit more than a “random PhD” expert on X :)