Hello friends! I hope you are having a great weekend. This week I want to write about something that has been on my mind for a very long time. A few weeks ago I wrote about Superintelligence and the risk of a “fast take-off” in AI, and today I would like to dig deeper into the question “how do we make sure AI does not kill us all??”.

I have planned to cover this for a long time, and I have gathered a lot of content and thoughts on it. What finally pushed me to write this post is that a couple of weeks ago Neuralink (Elon Musk’s brain implant company) announced the first implant of their device “Telepathy” in a human brain… and I think this really ties into one of the aspects of AI alignment that I will expand on at the end of the post!

Drawing Parallels: Atomic Energy and AGI

I really enjoyed watching "Oppenheimer" a few months ago, and while watching it, it was hard not to see a powerful analogy for our current journey with AGI. Just like the atomic bomb was a groundbreaking yet daunting development, AGI presents a similar mix of excitement and uncertainty. Both represent monumental leaps in human capability, pushing the boundaries of what we previously thought possible.

In "Oppenheimer," we see the internal struggle and the moral implications of creating something as potent as the atomic bomb. It's this same sense of venturing into uncharted territory that a lot of people argue we are facing with AGI. With atomic energy, the challenge was managing a force that had the potential to either revolutionise energy use or lead to unparalleled destruction.

The film captures the moment of realization – when the creators of the atomic bomb understood the magnitude of what they had unleashed. This is a crucial lesson for us in the AGI space. We're not just building sophisticated algorithms; we're potentially giving birth to a new form of intelligence. It raises the question: Are we prepared to manage and guide this intelligence responsibly, or will we find ourselves grappling with a force that's beyond our control?

Just as the world had to come to terms with the implications of atomic energy – setting international protocols and safety measures – a lot of people argue we need to take similar steps with AGI. It's about foresight, ethical considerations, and global cooperation.

What do we mean by Alignment (or Containment)?

When we talk about Alignment or Containment in the context of AI, we mean ensuring that AI systems not only understand but also adhere to human values and goals. It's about creating a harmonious relationship between AI's capabilities and humanity's interests, so that as AI becomes more integral to our lives, it supports rather than undermines human well-being.

Alignment is the process of ensuring that AI systems' objectives and actions are in sync with human values. It's like teaching a child the difference between right and wrong. Just as we want a child to grow up with a moral compass that guides them towards positive actions, we want AI to develop with an understanding of human ethics and priorities. This means instilling in AI systems a nuanced comprehension of human values, ethics, and the diverse tapestry of human culture and society.

Containment, on the other hand, is more about control and safety mechanisms. It involves setting boundaries for AI behavior and capabilities to prevent unintended consequences. Think of it as installing guardrails on a highway; they are there to keep the vehicles on the right path and prevent accidents. Containment strategies might include limiting AI's access to certain data, restricting its ability to act independently, or implementing failsafe protocols that can shut down or alter the AI's course of action if it starts veering off track. The book The Coming Wave (written by Mustafa Suleyman, one of DeepMind’s founders) has a lot of interesting takes on containment.

Both Alignment and Containment are essential in the development of AI. They represent the two pillars of creating AI systems that are not only powerful and intelligent but also safe, ethical, and beneficial to humanity.

The Urgency of AI Alignment and Containment

One might ask “do we really have to worry about this now?”, and frankly some very smart people, like Nick Bostrom (the author of the book Superintelligence that I quoted, and somebody very familiar with the nuances of AGI), share this view.

Bostrom's latest reflections pivot around a critical concern: the balance between recognizing AI's potential risks and not stifling its development with excessive fear.

One of Bostrom's key worries is the potential for society to react too strongly to the perceived dangers of AI. He fears that an overly cautious approach could lead to a "permafrost" scenario, where AI development is effectively halted. This could prevent humanity from realizing the potential benefits of AI, essentially cutting off a path to a potentially transformative future.

Bostrom's caution is not about dismissing the risks but rather about finding a "Goldilocks" level of concern – enough to ensure safety and ethical development, but not so much that it hinders progress. His concern is that public and policy attitudes toward AI might swing towards an extreme, driven by a fear-based mentality, potentially leading to stringent restrictions or even a ban on AI research.

On the other side, though, there are some signals that call for urgency in focusing on and discussing Alignment and Containment:

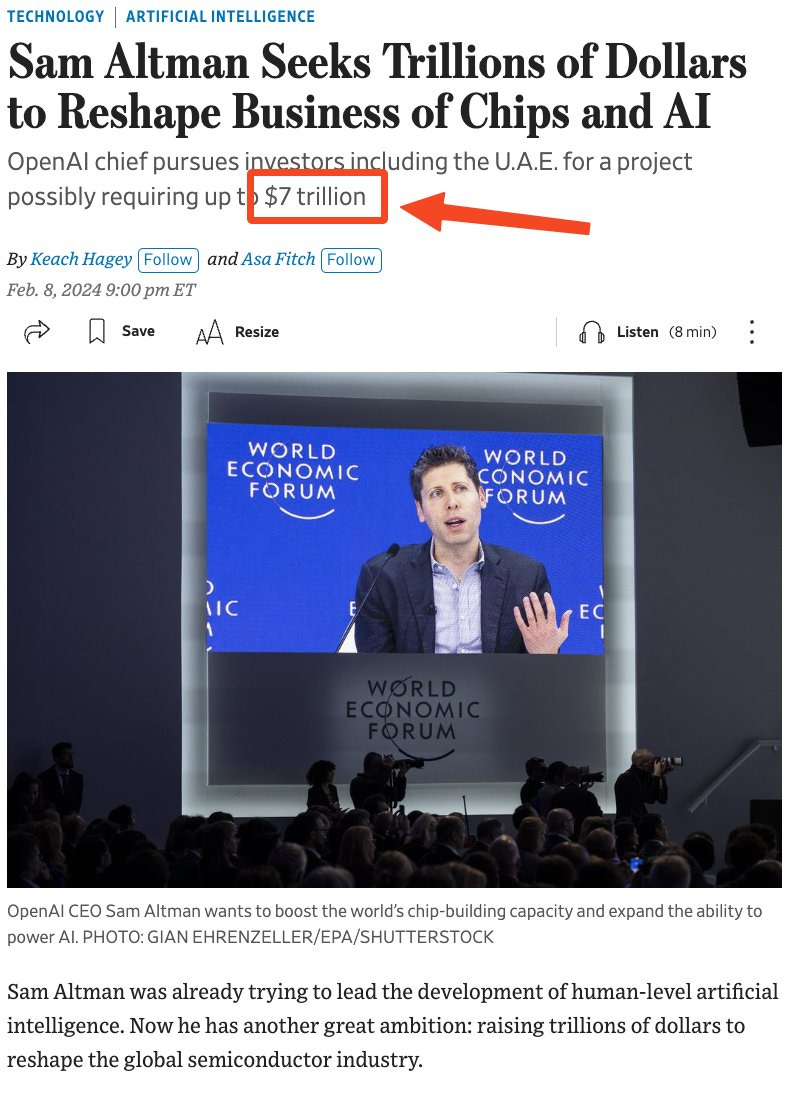

Accelerating Pace of AI Development: AI is advancing rapidly, finding its way into various aspects of our lives. This rapid development means we have less time to address alignment issues before they become problematic. Just to provide a signal of how fast we’re going, Sam Altman is reportedly trying to raise up to $7 Trillion (!!!!), which is roughly 7% of World GDP, to build AI-focused chips….

Approaching the Point of No Return: Similar to the "Oppenheimer" moment in atomic energy development, we may be nearing a point of no return with AI. The development of AGI could be a watershed moment, altering the trajectory of human history. It's crucial to address alignment issues before reaching this critical juncture.

Complexity of Predicting Timelines: Predicting the exact timeline for the advent of AGI is challenging. The lack of a precise timeline should not, however, be an excuse for inaction. Like preparing for a natural disaster, the unpredictability of its occurrence doesn’t lessen the need for preparedness.

Autonomous vehicles offer a compelling real-world example of why AI alignment challenges are pressing. For instance, imagine a situation where a vehicle must choose between swerving to avoid a pedestrian, potentially causing a collision with another vehicle, or staying its course, risking the pedestrian's life. These situations highlight the complexity of programming AI to make ethical decisions, mirroring real-life dilemmas.

Public understanding and engagement are crucial in shaping AI development. Awareness can lead to pressure on developers and policymakers to prioritize ethical considerations in AI development.

AI Safety Summit Highlights: A Global Call for Caution

In November 2023 the UK Government seemed to be receptive to this call for action and organized a Summit hosted at Bletchley Park, about 50 miles north-west of London. This is not a random building but where AI history took its first steps: during WWII, codebreakers like Alan Turing cracked the Enigma code (the cipher system Nazi Germany used during the war) with groundbreaking machines and techniques. These early "thinking machines" laid the foundation for modern AI, from cryptography to deep learning. Sunak gathered world leaders (and invited Elon) where AI was born.

As with most of these summits, the agenda revolved more around long-term goal setting and establishing a collaboration platform. But the focus, I believe, is a testament to how big a problem this is, and will increasingly be, in the global political landscape.

At the summit there was a consensus on the need for more proactive, risk-based, and internationally collaborative action to build safe frontier AI. This includes developing a shared scientific understanding of AI risks and building risk-based policies to ensure safety.

Anthropic's Innovative Approach: An AI Constitution

While regulation is necessary, I am not a huge fan of relying solely on state-driven regulation on these topics, because policy makers are often slow to react and struggle to move as fast as the industry requires. That’s why an interesting take I found on the Containment issue is what Anthropic (one of the key players in the AGI race) is doing.

They have introduced a novel concept: the design of an "AI constitution" for their AI model, Claude 2. The idea behind an AI constitution is to embed a set of foundational principles and guidelines directly into the AI's operational framework. This is akin to setting a moral and ethical compass for the AI, guiding its decision-making processes and ensuring its actions are aligned with human values and safety standards.

By incorporating a set of guiding principles within the AI itself, the approach seeks to ensure that the AI's actions remain consistent with intended ethical standards, even as it continues to learn and evolve.

What is Human?

One of the reasons why this topic of AI alignment fascinates me is that at the end of the day it requires us to answer one of the fundamental questions humanity has always posed: what makes us “human”?

And interestingly, a couple of months back I read (randomly, not because I wanted to read it in relation to AI) Dostoevsky's "Notes from Underground". And as I am learning more and more as I read Dostoevsky’s work, he understood humans like few people have!

In the book he offers a perspective on what it means to be human, built around 2 specific traits:

Free Will: Dostoevsky discusses the concept of free will, pondering its value and relationship with reason. He notes that while free will can be aligned with reason and moderation, it often defies rationality. This contradiction is a fundamental aspect of human nature, where irrational choices can be as significant as rational ones. It raises a question: Can AI systems truly replicate the depth and unpredictability of human free will?

“Some say that the most important thing for a man is his free will. It can be reasoned with, and that's good, but often it acts contrary to reason, and... you know, that's good too.”

The Importance of Suffering: The author also focuses on the role of suffering in the human experience. He challenges the notion that well-being is the sole good for humans, suggesting that humans might equally value suffering. Dostoevsky highlights that suffering can be as beneficial as well-being, with humans sometimes even craving it. This insight complicates the task of aligning AI with human values. If AI is to emulate human consciousness, how can it understand and incorporate the complex, often contradictory nature of human suffering and joy?

“Why are you so sure that only the normal and the positive – in other words, only well-being – is to man's advantage? Perhaps suffering is just as great a benefit to him.”

While reading these words I began to think about what this means for AI development, and how this is not a “simple containment problem” (which was probably more the case for nuclear energy for instance) but rather something that has to do with the intricacies of human conscience and emotions, challenging the idea of programming AI with a clear-cut set of human values.

Does AI need a body?

An alternative perspective on "what makes us human" suggests that a key element AI must embody is our sensory experience. Humans are beings of senses and physicality; our bodily experiences are integral to our identity. This is why, for instance, some AI experts (and Elon Musk is a big believer in this) argue that for AI to fully understand us and align with us we need to give it human form. We need AI systems to “see” (that’s why, for instance, Tesla AI is so focused on computer vision rather than radar systems: humans see through eyes, not radars), have a body (that’s why he’s building humanoid robots) and experience the world.

While OpenAI and Google were focusing on creating text-based chatbots, Musk decided to focus on artificial intelligence systems that operated in the physical world, such as robots and cars.

The next step in this conceptual framework is that we could allow AI to “connect with humans” at a body level… and that’s where the recent news of Neuralink’s brain implant becomes a key evolution.

Telepathy and Neuralink: The Frontier of Human-AI Integration

Neuralink, founded by Elon Musk, is a neurotechnology company focused on developing brain-computer interfaces (BCIs). It was born out of the need to enhance human capabilities and treat neurological disorders, but also with an eye towards the future integration of humans with AI.

As I mentioned earlier, some people believe that Brain-Computer Interfaces like Neuralink are pivotal for AI alignment because they offer a direct communication pathway between the human brain and computers. This interface could potentially allow humans to control and guide AI systems with their thoughts, ensuring AI actions are in harmony with human intentions and ethics, thereby addressing the AI alignment challenge.

And 2 weeks ago Neuralink announced it had implanted its first device, “Telepathy”, in a human brain.

The trial involves surgically implanting micro-thin threads into the brain, designed to be minimally invasive. The implant features 1024 electrodes across 64 threads and Neuralink's goal is to enhance quality of life for individuals with movement impairments, offering a new level of independence and interaction.

The concept of merging AI with our brains is both fascinating and daunting. This direct connection to AI presents risks, yet holds immense potential. The benefits of this technology could be substantial. It offers near-term solutions for tetraplegic patients and harbors long-term possibilities for treating neurological diseases and enhancing human capabilities. This revolutionary technology could significantly alter human evolution. However, as Spider-Man taught us, "With great power comes great responsibility". It's crucial to balance the immense opportunities with ethical considerations and safety.

The development of AI must be guided not just by technological advancements but also by ethical and moral considerations. This includes public awareness and involvement, ensuring that AI benefits are distributed equitably, and that its development is aligned with the broader goals of enhancing human well-being.

Have a fantastic weekend!

Giovanni